Human creators stand to benefit as AI rewrites the rules of content creation

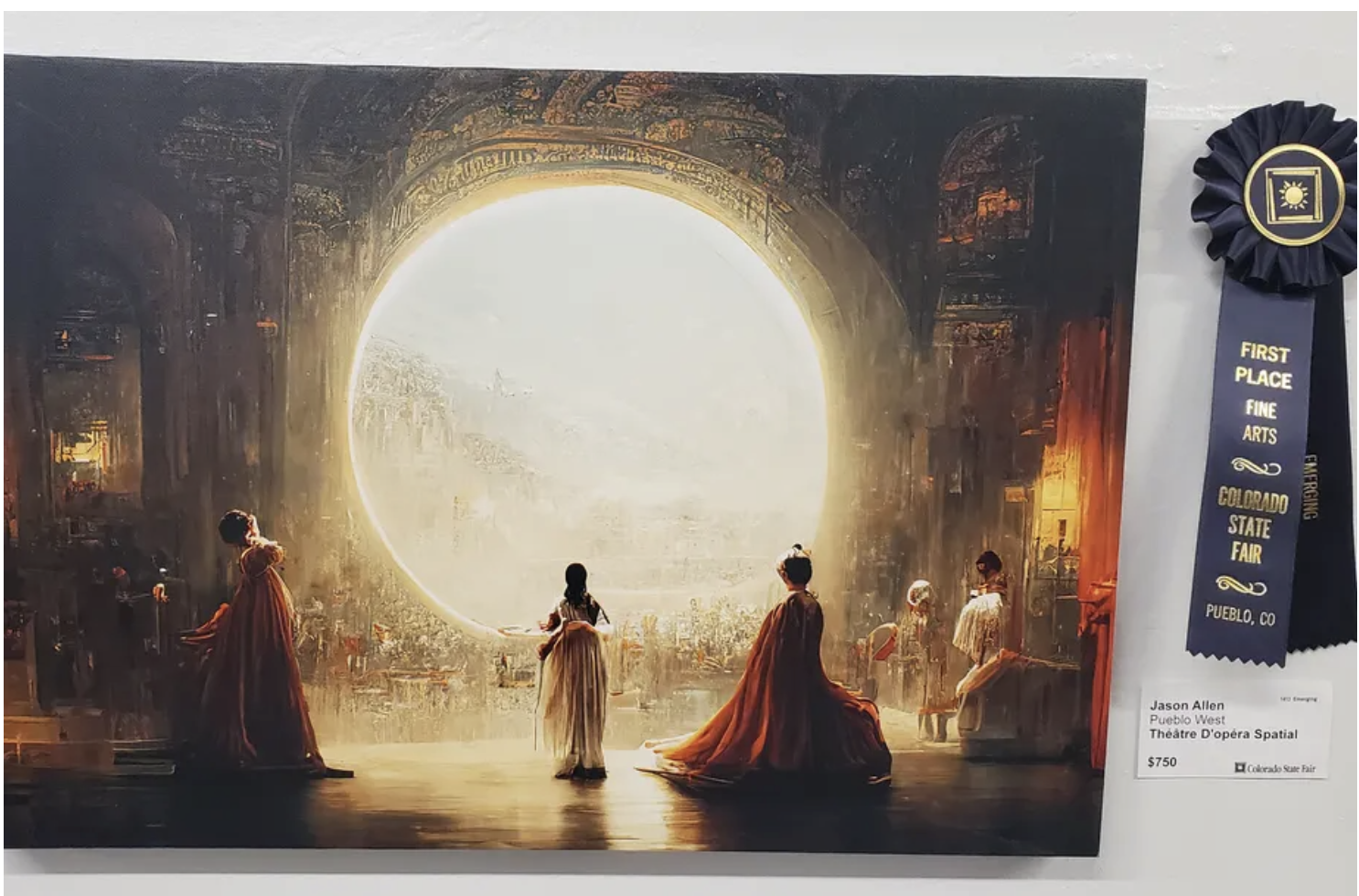

For years, the 150-year-old Colorado State Fair held its fine art competition with little media attention. But when it announced the 2022 winners in August, this little-known local event immediately sparked controversy around the globe. Judges had picked synthetic media artist Jason Allen’s artificial intelligence-generated work “Théâtre D’opéra Spatial” as the winner in the digital category. The decision triggered a slew of criticism on Twitter, including one post proclaiming it the “death of artistry.” Others expressed concern that technology could one day put artists out of work.

Until recently, machines, traditionally seen as predictable and lacking spontaneity, would hardly have been associated with creativity. However, artificial intelligence (AI) has brought the creative industry to an inflection point: AI-powered machines are becoming a key part of the generative and creative process. And Allen’s artwork, which depicts a surrealistic scene of a “space opera theater,” as its title suggests, not only demonstrates the ability of machines today to create images, but also represents their potential to elevate human creativity.

A game-changer for content creation

Among the AI-related technologies to have emerged in the past several years is generative AI—deep-learning algorithms that allow computers to generate original content, such as text, images, video, audio, and code. And demand for such content will likely jump in the coming years—Gartner predicts that by 2025, generative AI will account for 10% of all data created, compared with 1% in 2022.

“Théâtre D’opéra Spatial” is an example of AI-generated content (AIGC), created with the Midjourney text-to-art generator program. Several other AI-driven art-generating programs also emerged in 2022, capable of creating paintings from single-line text prompts. The diversity of technologies reflects a wide range of artistic styles and different user demands. DALL-E 2 and Stable Diffusion, for instance, are focused mainly on Western-style artwork, while Baidu’s ERNIE-ViLG and Wenxin Yige produce images influenced by Chinese aesthetics. At Baidu’s deep learning developer conference Wave Summit+ 2022, the company announced that Wenxin Yige has been updated with new features, including turning photos into AI-generated art, image editing, and one-click video production.

Meanwhile, AIGC can also include articles, videos, and various other media offerings such as voice synthesis. A technology that generates audible speech indistinguishable from the voice of the original speaker, voice synthesis can be applied in many scenarios, including voice navigation for digital maps. Baidu Maps, for example, allows users to customize its voice navigation to their own voice just by recording nine sentences.

Recent advances in AI technologies have also created generative language models that can fluently compose texts with just one click. They can be used for generating marketing copy, processing documents, extracting summaries, and other text tasks, unlocking creativity that other technologies such as voice synthesis have failed to tap. One of the leading generative language models is Baidu’s ERNIE 3.0, which has been widely applied in various industries such as health care, education, technology, and entertainment.

“In the past year, artificial intelligence has made a great leap and changed its technological direction,” says Robin Li, CEO of Baidu. “Artificial intelligence has gone from understanding pictures and text to generating content.” Going one step further, Baidu App, a popular search and newsfeed app with over 600 million monthly users, including five million content creators, recently released a video editing feature that can produce a short video accompanied by a voiceover created from data provided in an article.

Improving efficiency and growth

As AIGC becomes increasingly common, it could make content creation more efficient by relieving creators of repetitive, time-intensive tasks such as sorting out source assets and voice recordings, and rendering images. Aspiring filmmakers, for instance, have long had to pay their dues by spending countless hours mastering the complex and tedious process of video editing. AIGC may soon make that unnecessary.

Besides boosting efficiency, AIGC could also drive business growth in content creation amid rising demand for personalized digital content that users can interact with dynamically. InsightSLICE forecasts that the global digital creation market will grow an average of 12% annually between 2020 and 2030, reaching $38.2 billion. With content consumption fast outpacing production, traditional development methods will likely struggle to meet such increasing demand, creating a gap that could be filled by AIGC. “AI has the potential to meet this massive demand for content at a tenth of the cost and a hundred times or thousands of times faster in the next decade,” Li says.

AI with humanity as its foundation

AIGC can also serve as an educational tool by helping children develop their creativity. StoryDrawer, for instance, is an AI-driven program designed to boost children’s creative thinking, which often declines as the focus in their education shifts to rote learning.

Developed by Zhejiang University using Baidu’s AI algorithms, the program stimulates children’s imagination through visual storytelling. When a child describes an imaginary picture to the system, it generates the image based on the description while providing verbal prompts to encourage and inspire the child to expand on the image. This is based on the belief that children exercise their creative thinking better when they verbalize while drawing than when they simply draw. As the team continues to develop the program, they see strong potential for StoryDrawer to help autistic children develop speech and description skills.

Behind StoryDrawer is the Chinese adage, “以人为本,” which means “humanity as the foundation.” This motto has guided the Zhejiang University team in developing their AI art-generation system. They believe that any development of AI should seek to empower humans rather than replace them, and this core value is the key to unlocking the true potential of a promising but often misunderstood technology.

Redefining human potential in creation

Looking ahead, Robin Li foresees three main development stages for AI. First is the “assistant stage,” in which AI helps humans to generate content like audiobooks. Next is the “cooperation stage,” where AIGC appears in the form of virtual avatars coexisting in reality with creators. The final stage is the “original creation stage,” when AI generates content independently.

As with every new technology, it’s anybody’s guess how AIGC will fully unfold and evolve. While there’s plenty of uncertainty, history has shown that it is rare for any new technology to completely replace its predecessors. When the camera was invented in the 1800s, many criticized it: photographs were seen as inauthentic, and the automated process seemed to replace skilled artists who had spent years learning to make realistic paintings. Yet painting remains a cornerstone of the art world today.

Just as past technologies have helped expand art beyond the domain of a privileged few, the accessibility of AIGC is set to put the power of creativity into the hands of more people, enabling them to participate in high-value content creation. In the process, as it challenges long-held assumptions about art, AIGC is also redefining what it means to be an artist.

Learn how Baidu’s ERNIE-ViLG can bring ideas to life through text prompts.

This content was produced by Baidu. It was not written by MIT Technology Review’s editorial staff.