How AI is helping historians better understand our past

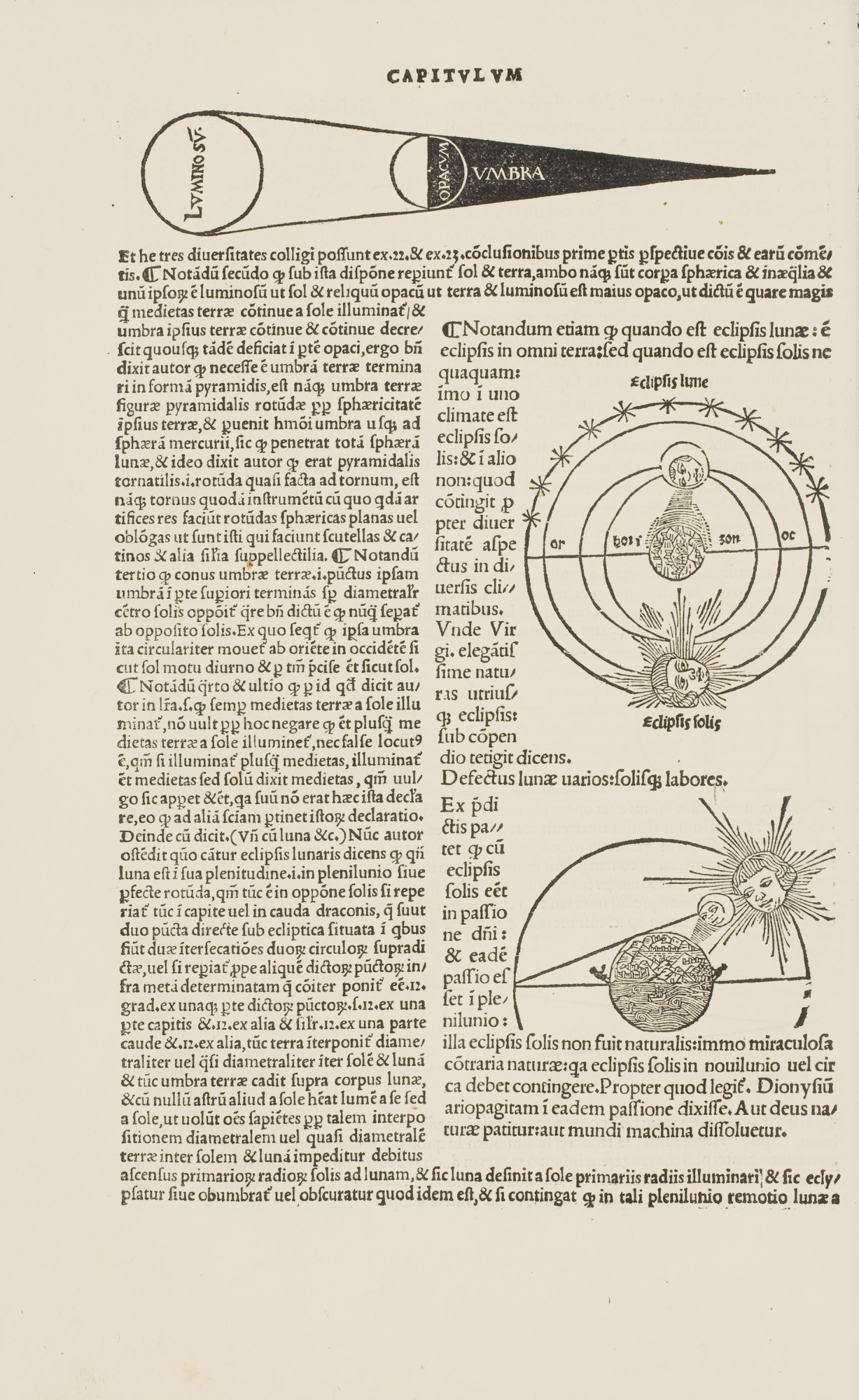

It’s an evening in 1531, in the city of Venice. In a printer’s workshop, an apprentice labors over the layout of a page that’s destined for an astronomy textbook—a dense line of type and a woodblock illustration of a cherubic head observing shapes moving through the cosmos, representing a lunar eclipse.

Like all aspects of book production in the 16th century, it’s a time-consuming process, but one that allows knowledge to spread with unprecedented speed.

Five hundred years later, the production of information is a different beast entirely: terabytes of images, video, and text in torrents of digital data that circulate almost instantly and have to be analyzed nearly as quickly, allowing—and requiring—the training of machine-learning models to sort through the flow. This shift in the production of information has implications for the future of everything from art creation to drug development.

But those advances are also making it possible to look differently at data from the past. Historians have started using machine learning—deep neural networks in particular—to examine historical documents, including astronomical tables like those produced in Venice and other early modern cities, smudged by centuries spent in mildewed archives or distorted by the slip of a printer’s hand.

Historians say the application of modern computer science to the distant past helps draw connections across a broader swath of the historical record than would otherwise be possible, correcting distortions that come from analyzing history one document at a time. But it introduces distortions of its own, including the risk that machine learning will slip bias or outright falsifications into the historical record. All this adds up to a question for historians and others who, it’s often argued, understand the present by examining history: With machines set to play a greater role in the future, how much should we cede to them of the past?

Parsing complexity

Big data has come to the humanities throughinitiatives to digitize increasing numbers of historical documents, like the Library of Congress’s collection of millions of newspaper pages and the Finnish Archives’ court records dating back to the 19th century. For researchers, this is at once a problem and an opportunity: there is much more information, and often there has been no existing way to sift through it.

That challenge has been met with the development of computational tools that help scholars parse complexity. In 2009, Johannes Preiser-Kapeller, a professor at the Austrian Academy of Sciences, was examining a registry of decisions from the 14th-century Byzantine Church. Realizing that making sense of hundreds of documents would require a systematic digital survey of bishops’ relationships, Preiser-Kapeller built a database of individuals and used network analysis software to reconstruct their connections.

This reconstruction revealed hidden patterns of influence, leading Preiser-Kapeller to argue that the bishops who spoke the most in meetings weren’t the most influential; he’s since applied the technique to other networks, including the 14th-century Byzantian elite, uncovering ways in which its social fabric was sustained through the hidden contributions of women. “We were able to identify, to a certain extent, what was going on outside the official narrative,” he says.

Preiser-Kapeller’s work is but one example of this trend in scholarship. But until recently, machine learning has often been unable to draw conclusions from ever larger collections of text—not least because certain aspects of historical documents (in Preiser-Kapeller’s case, poorly handwritten Greek) made them indecipherable to machines. Now advances in deep learning have begun to address these limitations, using networks that mimic the human brain to pick out patterns in large and complicated data sets.

Nearly 800 years ago, the 13th-century astronomer Johannes de Sacrobosco published the Tractatus de sphaera, an introductory treatise on the geocentric cosmos. That treatise became required reading for early modern university students. It was the most widely distributed textbook on geocentric cosmology, enduring even after the Copernican revolution upended the geocentric view of the cosmos in the 16th century.

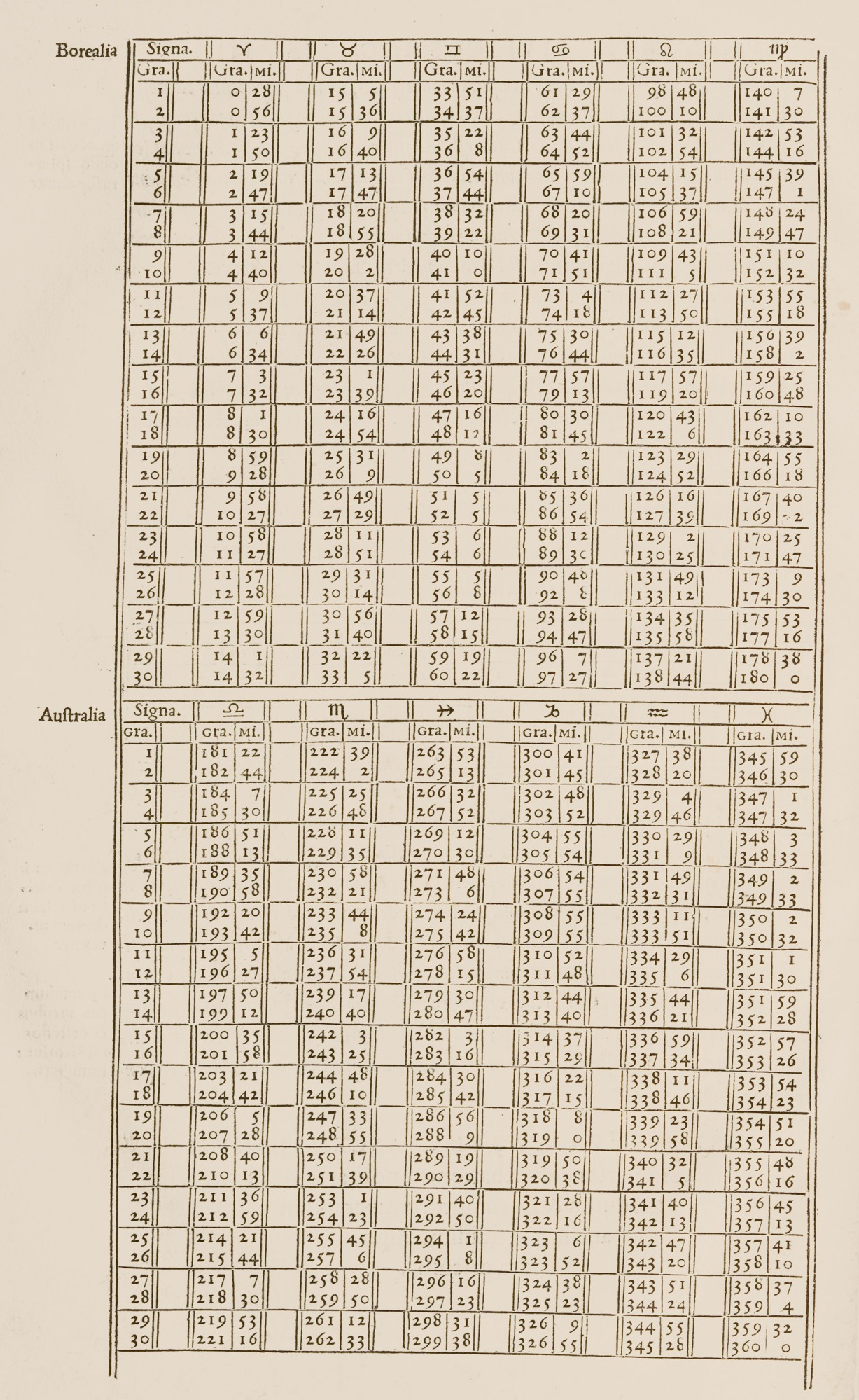

The treatise is also the star player in a digitized collection of 359 astronomy textbooks published between 1472 and 1650—76,000 pages, including tens of thousands of scientific illustrations and astronomical tables. In that comprehensive data set, Matteo Valleriani, a professor with the Max Planck Institute for the History of Science, saw an opportunity to trace the evolution of European knowledge toward a shared scientific worldview. But he realized that discerning the pattern required more than human capabilities. So Valleriani and a team of researchers at the Berlin Institute for the Foundations of Learning and Data (BIFOLD) turned to machine learning.

This required dividing the collection into three categories: text parts (sections of writing on a specific subject, with a clear beginning and end); scientific illustrations, which helped illuminate concepts such as a lunar eclipse; and numerical tables, which were used to teach mathematical aspects of astronomy.

All this adds up to a question for historians: With machines set to play a greater role in the future, how much should we cede to them of the past?

At the outset, Valleriani says, the text defied algorithmic interpretation. For one thing, typefaces varied widely; early modern print shops developed unique ones for their books and often had their own metallurgic workshops to cast their letters. This meant that a model using natural-language processing (NLP) to read the text would need to be retrained for each book.

The language also posed a problem. Many texts were written in regionally specific Latin dialects often unrecognizable to machines that haven’t been trained on historical languages. “This is a big limitation in general for natural-language processing, when you don’t have the vocabulary to train in the background,” says Valleriani. This is part of the reason NLP works well for dominant languages like English but is less effective on, say, ancient Hebrew.

Instead, researchers manually extracted the text from the source materials and identified single links between sets of documents—for instance, when a text was imitated or translated in another book. This data was placed in a graph, which automatically embedded those single links in a network containing all the records (researchers then used a graph to train a machine-learning method that can suggest connections between texts). That left the visual elements of the texts: 20,000 illustrations and 10,000 tables, which researchers used neural networks to study.

Present tense

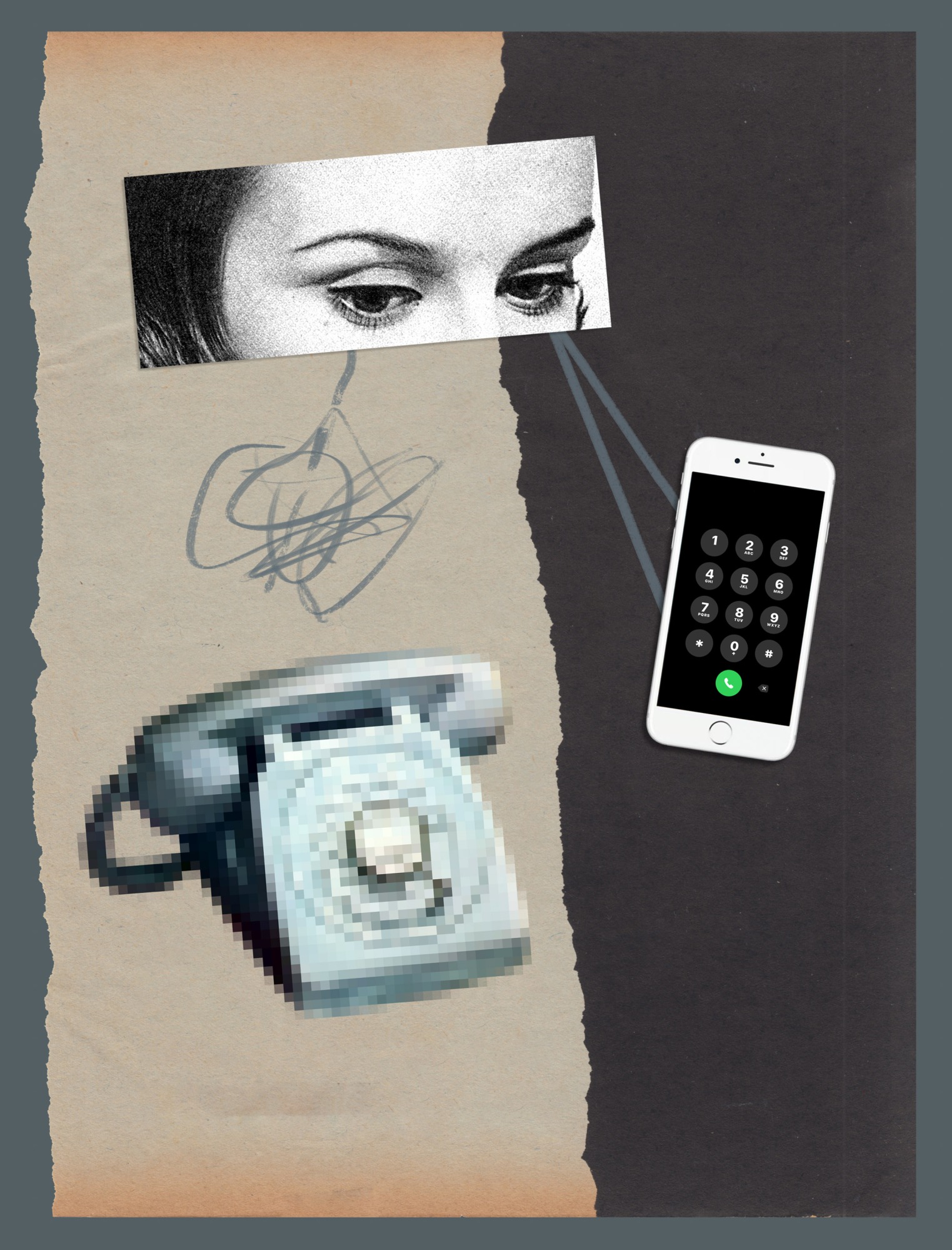

Computer vision for historical images faces similar challenges to NLP; it has what Lauren Tilton, an associate professor of digital humanities at the University of Richmond, calls a “present-ist” bias. Many AI models are trained on data sets from the last 15 years, says Tilton, and the objects they’ve learned to list and identify tend to be features of contemporary life, like cell phones or cars. Computers often recognize only contemporary iterations of objects that have a longer history—think iPhones and Teslas, rather than switchboards and Model Ts. To top it off, models are typically trained on high-resolution color images rather than the grainy black-and-white photographs of the past (or early modern depictions of the cosmos, inconsistent in appearance and degraded by the passage of time). This all makes computer vision less accurate when applied to historical images.

“We’ll talk to computer science folks, and they’ll say, ‘Well, we solved object detection,’” she says. “And we’ll say, actually, if you take a set of photos from the 1930s, you’re going to see it hasn’t quite been as solved as we think.” Deep-learning models, which can identify patterns in large quantities of data, can help because they’re capable of greater abstraction.

In the case of the Sphaeraproject, BIFOLD researchers trained a neural network to detect, classify, and cluster (according to similarity) illustrations from early modern texts; that model is now accessible to other historians via a public web service called CorDeep. They also took a novel approach to analyzing other data. For example, various tables found throughout the hundreds of books in the collection couldn’t be compared visually because “the same table can be printed 1,000 different ways,” Valleriani explains. So researchers developed a neural network architecture that detects and clusters similar tables on the basis of the numbers they contain, ignoring their layout.

So far, the project has yielded some surprising results. One pattern found in the data allowed researchers to see that while Europe was fracturing along religious lines after the Protestant Reformation, scientific knowledge was coalescing. The scientific texts being printed in places such as the Protestant city of Wittenberg, which had become a center for scholarly innovation thanks to the work of Reformed scholars, were being imitated in hubs like Paris and Venice before spreading across the continent. The Protestant Reformation isn’t exactly an understudied subject, Valleriani says, but a machine-mediated perspective allowed researchers to see something new: “This was absolutely not clear before.” Models applied to the tables and images have started to return similar patterns.

Computers often recognize only contemporary iterations of objects that have a longer history—think iPhones and Teslas, rather than switchboards and Model Ts.

These tools offer possibilities more significant than simply keeping track of 10,000 tables, says Valleriani. Instead, they allow researchers to draw inferences about the evolution of knowledge from patterns in clusters of records even if they’ve actually examined only a handful of documents. “By looking at two tables, I can already make a huge conclusion about 200 years,” he says.

Deep neural networks are also playing a role in examining even older history. Deciphering inscriptions (known as epigraphy) and restoring damaged examples are painstaking tasks, especially when inscribed objects have been moved or are missing contextual cues. Specialized historians need to make educated guesses. To help, Yannis Assael, a research scientist with DeepMind, and Thea Sommerschield, a postdoctoral fellow at Ca’ Foscari University of Venice, developed a neural network called Ithaca, which can reconstruct missing portions of inscriptions and attribute dates and locations to the texts. Researchers say the deep-learning approach—which involved training on a data set of more than 78,000 inscriptions—is the first to address restoration and attribution jointly, through learning from large amounts of data.

So far, Assael and Sommerschield say, the approach is shedding light on inscriptions of decrees from an important period in classical Athens, which have long been attributed to 446 and 445 BCE—a date that some historians have disputed. As a test, researchers trained the model on a data set that did not contain the inscription in question, and then asked it to analyze the text of the decrees. This produced a different date. “Ithaca’s average predicted date for the decrees is 421 BCE, aligning with the most recent dating breakthroughs and showing how machine learning can contribute to debates around one of the most significant moments in Greek history,” they said by email.

Time machines

Other projects propose to use machine learning to draw even broader inferences about the past. This was the motivation behind the Venice Time Machine, one of several local “time machines” across Europe that have now been established to reconstruct local history from digitized records. The Venetian state archives cover 1,000 years of history spread across 80 kilometers of shelves; the researchers’ aim was to digitize these records, many of which had never been examined by modern historians. They would use deep-learning networks to extract information and, by tracing names that appear in the same document across other documents, reconstruct the ties that once bound Venetians.

Frédéric Kaplan, president of the Time Machine Organization, says the project has now digitized enough of the city’s administrative documents to capture the texture of the city in centuries past, making it possible to go building by building and identify the families who lived there at different points in time. “These are hundreds of thousands of documents that need to be digitized to reach this form of flexibility,” says Kaplan. “This has never been done before.”

Still, when it comes to the project’s ultimate promise—no less than a digital simulation of medieval Venice down to the neighborhood level, through networks reconstructed by artificial intelligence—historians like Johannes Preiser-Kapeller, the Austrian Academy of Sciences professor who ran the study of Byzantine bishops, say the project hasn’t been able to deliver because the model can’t understand which connections are meaningful.

Preiser-Kapeller has done his own experiment using automatic detection to develop networks from documents—extracting network information with an algorithm, rather than having an expert extract information to feed into the network as in his work on the bishops—and says it produces a lot of “artificial complexity” but nothing that serves in historical interpretation. The algorithm was unable to distinguish instances where two people’s names appeared on the same roll of taxpayers from cases where they were on a marriage certificate, so as Preiser-Kapeller says, “What you really get has no explanatory value.” It’s a limitation historians have highlighted with machine learning, similar to the point people have made about large language models like ChatGPT: because models ultimately don’t understand what they’re reading, they can arrive at absurd conclusions.

It’s true that with the sources that are currently available, human interpretation is needed to provide context, says Kaplan, though he thinks this could change once a sufficient number of historical documents are made machine readable.

But he imagines an application of machine learning that’s more transformational—and potentially more problematic. Generative AI could be used to make predictions that flesh out blank spots in the historical record—for instance, about the number of apprentices in a Venetian artisan’s workshop—based not on individual records, which could be inaccurate or incomplete, but on aggregated data. This may bring more non-elite perspectives into the picture but runs counter to standard historical practice, in which conclusions are based on available evidence.

Still, a more immediate concern is posed by neural networks that create false records.

Is it real?

On YouTube, viewers can now watch Richard Nixon make a speech that had been written in case the 1969 moon landing ended in disaster but fortunately never needed to be delivered. Researchers created the deepfake to show how AI could affect our shared sense of history. In seconds, one can generate false images of major historical events like the D-Day landings, as Northeastern history professor Dan Cohen discussed recently with students in a class dedicated to exploring the way digital media and technology are shaping historical study. “[The photos are] entirely convincing,” he says. “You can stick a whole bunch of people on a beach and with a tank and a machine gun, and it looks perfect.”

False history is nothing new—Cohen points to the way Joseph Stalin ordered enemies to be erased from history books, as an example—but the scale and speed with which fakes can be created is breathtaking, and the problem goes beyond images. Generative AI can create texts that read plausibly like a parliamentary speech from the Victorian era, as Cohen has done with his students. By generating historical handwriting or typefaces, it could also create what looks convincingly like a written historical record.

Meanwhile, AI chatbots like Character.ai and Historical Figures Chat allow users to simulate interactions with historical figures. Historians have raised concerns about these chatbots, which may, for example, make some individuals seem less racist and more remorseful than they actually were.

In other words, there’s a risk that artificial intelligence, from historical chatbots to models that make predictions based on historical records, will get things very wrong. Some of these mistakes are benign anachronisms: a query to Aristotle on the chatbot Character.ai about his views on women (whom he saw as inferior) returned an answer that they should “have no social media.” But others could be more consequential—especially when they’re mixed into a collection of documents too large for a historian to be checking individually, or if they’re circulated by someone with an interest in a particular interpretation of history.

Even if there’s no deliberate deception, some scholars have concerns that historians may use tools they’re not trained to understand. “I think there’s great risk in it, because we as humanists or historians are effectively outsourcing analysis to another field, or perhaps a machine,” says Abraham Gibson, a history professor at the University of Texas at San Antonio. Gibson says until very recently, fellow historians he spoke to didn’t see the relevance of artificial intelligence to their work, but they’re increasingly waking up to the possibility that they could eventually yield some of the interpretation of history to a black box.

This “black box” problem is not unique to history: even developers of machine-learning systems sometimes struggle to understand how they function. Fortunately, some methods designed with historians in mind are structured to provide greater transparency. Ithaca produces a range of hypotheses ranked by probability, and BIFOLD researchers are working on the interpretation of their models with explainable AI, which is meant to reveal which inputs contribute most to predictions. Historians say they themselves promote transparency by encouraging people to view machine learning with critical detachment: as a useful tool, but one that’s fallible, just like people.

The historians of tomorrow

While skepticism toward such new technology persists, the field is gradually embracing it, and Valleriani thinks that in time, the number of historians who reject computational methods will dwindle. Scholars’ concerns about the ethics of AI are less a reason not to use machine learning, he says, than an opportunity for the humanities to contribute to its development.

As the French historian Emmanuel Le Roy Ladurie wrote in 1968, in response to the work of historians who had started experimenting with computational history to investigate questions such as voting patterns of the British parliament in the 1840s, “the historian of tomorrow will be a programmer, or he will not exist.”

Moira Donovan is an independent science journalist based in Halifax, Nova Scotia.