Generative AI is changing everything. But what’s left when the hype is gone?

It was clear that OpenAI was on to something. In late 2021, a small team of researchers was playing around with an idea at the company’s San Francisco office. They’d built a new version of OpenAI’s text-to-image model, DALL-E, an AI that converts short written descriptions into pictures: a fox painted by Van Gogh, perhaps, or a corgi made of pizza. Now they just had to figure out what to do with it.

“Almost always, we build something and then we all have to use it for a while,” Sam Altman, OpenAI’s cofounder and CEO, tells MIT Technology Review. “We try to figure out what it’s going to be, what it’s going to be used for.”

Not this time. As they tinkered with the model, everyone involved realized this was something special. “It was very clear that this was it—this was the product,” says Altman. “There was no debate. We never even had a meeting about it.”

But nobody—not Altman, not the DALL-E team—could have predicted just how big a splash this product was going to make. “This is the first AI technology that has caught fire with regular people,” says Altman.

DALL-E 2 dropped in April 2022. In May, Google announced (but did not release) two text-to-image models of its own, Imagen and Parti. Then came Midjourney, a text-to-image model made for artists. And August brought Stable Diffusion, an open-source model that the UK-based startup Stability AI has released to the public for free.

The doors were off their hinges. OpenAI signed up a million users in just 2.5 months. More than a million people started using Stable Diffusion via its paid-for service DreamStudio in less than half that time; many more used Stable Diffusion through third-party apps or installed the free version on their own computers. (Emad Mostaque, Stability AI’s founder, says he’s aiming for a billion users.)

And then in October we had Round Two: a spate of text-to-video models from Google, Meta, and others. Instead of just generating still images, these can create short video clips, animations, and 3D pictures.

The pace of development has been breathtaking. In just a few months, the technology has inspired hundreds of newspaper headlines and magazine covers, filled social media with memes, kicked a hype machine into overdrive—and set off an intense backlash.

This story is part of our upcoming 10 Breakthrough Technologies 2023 series.

“The shock and awe of this technology is amazing—and it’s fun, it’s what new technology should be,” says Mike Cook, an AI researcher at King’s College London who studies computational creativity. “But it’s moved so fast that your initial impressions are being updated before you even get used to the idea. I think we’re going to spend a while digesting it as a society.”

Artists are caught in the middle of one of the biggest upheavals in a generation. Some will lose work; some will find new opportunities. A few are headed to the courts to fight legal battles over what they view as the misappropriation of images to train models that could replace them.

Creators were caught off guard, says Don Allen Stevenson III, a digital artist based in California who has worked at visual-effects studios such as DreamWorks. “For technically trained folks like myself, it’s very scary. You’re like, ‘Oh my god—that’s my whole job,’” he says. “I went into an existential crisis for the first month of using DALL-E.”

But while some are still reeling from the shock, many—including Stevenson—are finding ways to work with these tools and anticipate what comes next.

The exciting truth is, we don’t really know. For while creative industries—from entertainment media to fashion, architecture, marketing, and more—will feel the impact first, this tech will give creative superpowers to everybody. In the longer term, it could be used to generate designs for almost anything, from new types of drugs to clothes and buildings. The generative revolution has begun.

A magical revolution

For Chad Nelson, a digital creator who has worked on video games and TV shows, text-to-image models are a once-in-a-lifetime breakthrough. “This tech takes you from that lightbulb in your head to a first sketch in seconds,” he says. “The speed at which you can create and explore is revolutionary—beyond anything I’ve experienced in 30 years.”

Within weeks of their debut, people were using these tools to prototype and brainstorm everything from magazine illustrations and marketing layouts to video-game environments and movie concepts. People generated fan art, even whole comic books, and shared them online in the thousands. Altman even used DALL-E to generate designs for sneakers that someone then made for him after he tweeted the image.

Amy Smith, a computer scientist at Queen Mary University of London and a tattoo artist, has been using DALL-E to design tattoos. “You can sit down with the client and generate designs together,” she says. “We’re in a revolution of media generation.”

Paul Trillo, a digital and video artist based in California, thinks the technology will make it easier and faster to brainstorm ideas for visual effects. “People are saying this is the death of effects artists, or the death of fashion designers,” he says. “I don’t think it’s the death of anything. I think it means we don’t have to work nights and weekends.”

Stock image companies are taking different positions. Getty has banned AI-generated images. Shutterstock has signed a deal with OpenAI to embed DALL-E in its website and says it will start a fund to reimburse artists whose work has been used to train the models.

Stevenson says he has tried out DALL-E at every step of the process that an animation studio uses to produce a film, including designing characters and environments. With DALL-E, he was able to do the work of multiple departments in a few minutes. “It’s uplifting for all the folks who’ve never been able to create because it was too expensive or too technical,” he says. “But it’s terrifying if you’re not open to change.”

Nelson thinks there’s still more to come. Eventually, he sees this technology being embraced not only by media giants but also by architecture and design firms. It’s not ready yet, though, he says.

“Right now it’s like you have a little magic box, a little wizard,” he says. That’s great if you just want to keep generating images, but not if you need a creative partner. “If I want it to create stories and build worlds, it needs far more awareness of what I’m creating,” he says.

That’s the problem: these models still have no idea what they’re doing.

Inside the black box

To see why, let’s look at how these programs work. From the outside, the software is a black box. You type in a short description—a prompt—and then wait a few seconds. What you get back is a handful of images that fit that prompt (more or less). You may have to tweak your text to coax the model to produce something closer to what you had in mind, or to hone a serendipitous result. This has become known as prompt engineering.

Prompts for the most detailed, stylized images can run to several hundred words, and wrangling the right words has become a valuable skill. Online marketplaces have sprung up where prompts known to produce desirable results are bought and sold.

Prompts can contain phrases that instruct the model to go for a particular style: “trending on ArtStation” tells the AI to mimic the (typically very detailed) style of images popular on ArtStation, a website where thousands of artists showcase their work; “Unreal engine” invokes the familiar graphic style of certain video games; and so on. Users can even enter the names of specific artists and have the AI produce pastiches of their work, which has made some artists very unhappy.

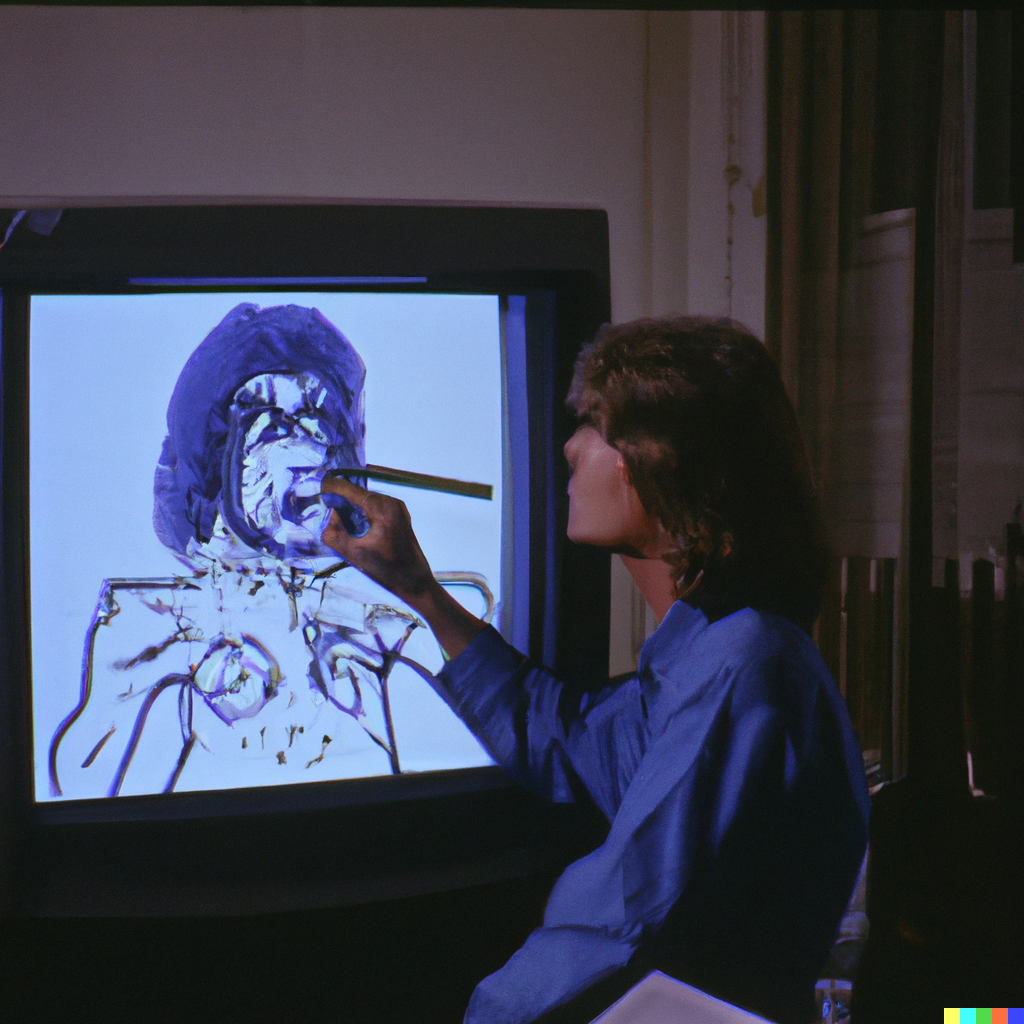

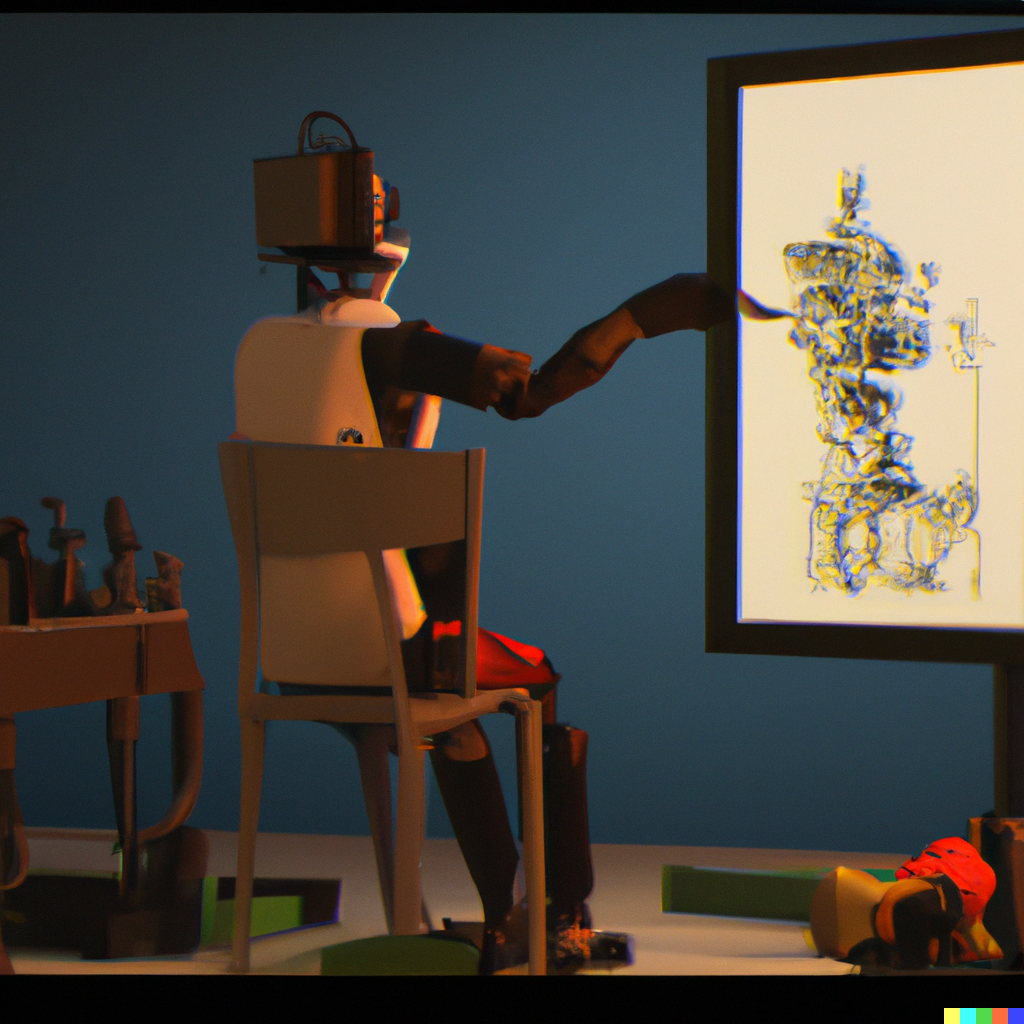

“I tried to metaphorically represent AI with the prompt ‘the Big Bang’ and ended up with these abstract bubble-like forms (right). It wasn’t exactly what I wanted, so then I went more literal with ‘explosion in outer space 1980s photograph’ (left), which seemed too aggressive. I also tried growing some digital plants by putting in ‘plant 8-bit pixel art’ (center).”

Under the hood, text-to-image models have two key components: one neural network trained to pair an image with text that describes that image, and another trained to generate images from scratch. The basic idea is to get the second neural network to generate an image that the first neural network accepts as a match for the prompt.

The big breakthrough behind the new models is in the way images get generated. The first version of DALL-E used an extension of the technology behind OpenAI’s language model GPT-3, producing images by predicting the next pixel in an image as if pixels were words in a sentence. This worked, but not well. “It was not a magical experience,” says Altman. “It’s amazing that it worked at all.”

Instead, DALL-E 2 uses something called a diffusion model. Diffusion models are neural networks trained to clean images up by removing pixelated noise that the training process adds. The process involves taking images and changing a few pixels in them at a time, over many steps, until the original images are erased and you’re left with nothing but random pixels. “If you do this a thousand times, eventually the image looks like you have plucked the antenna cable from your TV set—it’s just snow,” says Björn Ommer, who works on generative AI at the University of Munich in Germany and who helped build the diffusion model that now powers Stable Diffusion.
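To make the noising process concrete, here is a minimal Python sketch of it. It is a toy under stated assumptions, not how any production model is implemented: the "image" is a flat list of 256 gray values, and the function names and the 5% per-step fraction are invented for illustration. Real diffusion models typically add a little Gaussian noise to every pixel at each step rather than overwriting a few pixels outright.

```python
import random


def add_noise_step(pixels, fraction, rng):
    """Overwrite a random fraction of the pixels with random values."""
    noisy = list(pixels)
    count = max(1, int(len(noisy) * fraction))
    for index in rng.sample(range(len(noisy)), count):
        noisy[index] = rng.random()
    return noisy


def forward_process(pixels, steps, fraction=0.05, seed=0):
    """Return the image after 0, 1, ..., `steps` noising steps."""
    rng = random.Random(seed)
    history = [list(pixels)]
    for _ in range(steps):
        history.append(add_noise_step(history[-1], fraction, rng))
    return history


def unchanged_fraction(original, current):
    """Share of pixels that still hold their original value."""
    same = sum(1 for a, b in zip(original, current) if a == b)
    return same / len(original)


image = [0.5] * 256  # a flat gray 16x16 toy "image" (hypothetical data)
history = forward_process(image, steps=200)
early = unchanged_fraction(image, history[10])   # most pixels still intact
late = unchanged_fraction(image, history[-1])    # almost nothing left: "snow"
```

Comparing `early` with `late` shows the original being erased a few pixels at a time, which is the effect Ommer describes.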

The neural network is then trained to reverse that process and predict what the less pixelated version of a given image would look like. The upshot is that if you give a diffusion model a mess of pixels, it will try to generate something a little cleaner. Plug the cleaned-up image back in, and the model will produce something cleaner still. Do this enough times and the model can take you all the way from TV snow to a high-resolution picture.
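The feed-the-output-back-in loop can be sketched in a few lines of Python. The important caveat is that `toy_denoiser` below is a hypothetical stand-in: it is handed the clean target and simply moves each pixel 10% of the way toward it, whereas a real diffusion model has to predict the cleaner image with a trained neural network. Only the shape of the loop is meant to carry over.

```python
import random


def toy_denoiser(noisy, target, strength=0.1):
    """Stand-in for the trained network: return a slightly cleaner image."""
    return [n + strength * (t - n) for n, t in zip(noisy, target)]


def generate(target, steps, seed=0):
    """Start from random pixels and repeatedly plug the output back in."""
    rng = random.Random(seed)
    image = [rng.random() for _ in target]  # the "TV snow" starting point
    for _ in range(steps):
        image = toy_denoiser(image, target)
    return image


def mean_abs_error(a, b):
    """Average per-pixel distance between two images."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


target = [0.2, 0.8, 0.5, 0.9] * 16  # hypothetical 64-pixel "picture"
rough = generate(target, steps=5)
clean = generate(target, steps=100)
```

Each pass removes a tenth of the remaining error, so `clean` ends up far closer to the target than `rough`; no single step does much on its own.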

AI art generators never work exactly how you want them to. They often produce hideous results that can resemble distorted stock art, at best. In my experience, the only way to really make the work look good is to add a descriptor at the end with a style that looks aesthetically pleasing.

~Erik Carter

The trick with text-to-image models is that this process is guided by the language model that’s trying to match a prompt to the images the diffusion model is producing. This pushes the diffusion model toward images that the language model considers a good match.
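One way to picture this guidance is a loop in which a generator proposes slightly different images and a matcher keeps whichever one best fits the prompt. The Python sketch below is a deliberately crude illustration with invented names: `match_score` judges only average brightness using a hypothetical two-entry prompt table, and `propose` just jitters pixels at random. Real systems use trained networks for both roles and usually steer each denoising step directly rather than picking among candidates.

```python
import random

# Hypothetical prompt-to-brightness table standing in for a text-image matcher.
PROMPT_TARGETS = {"a bright room": 0.9, "a dark room": 0.1}


def match_score(image, prompt):
    """Higher is better: closeness of mean brightness to the prompt's target."""
    mean = sum(image) / len(image)
    return -abs(mean - PROMPT_TARGETS[prompt])


def propose(image, rng, spread=0.05):
    """Stand-in for one generator step: nudge every pixel, clamped to [0, 1]."""
    return [min(1.0, max(0.0, p + rng.gauss(0.0, spread))) for p in image]


def guided_generate(prompt, steps=200, candidates=4, size=16, seed=0):
    """Start from noise; at each step keep the option the matcher prefers."""
    rng = random.Random(seed)
    start = [rng.random() for _ in range(size)]
    image = start
    for _ in range(steps):
        # The current image stays in the running, so the score never drops.
        options = [image] + [propose(image, rng) for _ in range(candidates)]
        image = max(options, key=lambda option: match_score(option, prompt))
    return start, image


start, final = guided_generate("a bright room")
```

The matcher never draws anything itself. It only ranks what the generator offers, and that ranking is enough to pull the result toward the prompt.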

But the models aren’t pulling the links between text and images out of thin air. Most text-to-image models today are trained on a large data set called LAION, which contains billions of pairings of text and images scraped from the internet. This means that the images you get from a text-to-image model are a distillation of the world as it’s represented online, distorted by prejudice (and pornography).

One last thing: there’s a small but crucial difference between the two most popular models, DALL-E 2 and Stable Diffusion. DALL-E 2’s diffusion model works on full-size images. Stable Diffusion, on the other hand, uses a technique called latent diffusion, invented by Ommer and his colleagues. It works on compressed versions of images encoded within the neural network in what’s known as a latent space, where only the essential features of an image are retained.

This means Stable Diffusion requires less computing muscle to work. Unlike DALL-E 2, which runs on OpenAI’s powerful servers, Stable Diffusion can run on (good) personal computers. Much of the explosion of creativity and the rapid development of new apps is due to the fact that Stable Diffusion is both open source—programmers are free to change it, build on it, and make money from it—and lightweight enough for people to run at home.
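A quick back-of-the-envelope calculation suggests the scale of the saving. The shapes below are assumptions that do not come from this article: they are the figures commonly reported for the first public Stable Diffusion models, where a 512x512 RGB image is encoded into a 64x64 latent with four channels.

```python
def value_count(height, width, channels):
    """Number of values the model has to process for one image."""
    return height * width * channels


pixel_values = value_count(512, 512, 3)  # full-size RGB image
latent_values = value_count(64, 64, 4)   # assumed latent shape
ratio = pixel_values / latent_values     # how many times smaller the latent is
```

Under those assumptions, each denoising step handles 48 times fewer values than it would on the full-size image, which goes a long way toward explaining why the model fits on a home machine.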

Redefining creativity

For some, these models are a step toward artificial general intelligence, or AGI—an over-hyped buzzword referring to a future AI that has general-purpose or even human-like abilities. OpenAI has been explicit about its goal of achieving AGI. For that reason, Altman doesn’t care that DALL-E 2 now competes with a raft of similar tools, some of them free. “We’re here to make AGI, not image generators,” he says. “It will fit into a broader product road map. It’s one smallish element of what an AGI will do.”

That’s optimistic, to say the least—many experts believe that today’s AI will never reach that level. In terms of basic intelligence, text-to-image models are no smarter than the language-generating AIs that underpin them. Tools like GPT-3 and Google’s PaLM regurgitate patterns of text ingested from the many billions of documents they are trained on. Similarly, DALL-E and Stable Diffusion reproduce associations between text and images found across billions of examples online.

The results are dazzling, but poke too hard and the illusion shatters. These models make basic howlers—responding to “salmon in a river” with a picture of chopped-up fillets floating downstream, or to “a bat flying over a baseball stadium” with a picture of both a flying mammal and a wooden stick. That’s because they are built on top of a technology that is nowhere close to understanding the world as humans (or even most animals) do.

Even so, it may be just a matter of time before these models learn better tricks. “People say it’s not very good at this thing now, and of course it isn’t,” says Cook. “But a hundred million dollars later, it could well be.”

That’s certainly OpenAI’s approach.

“We already know how to make it 10 times better,” says Altman. “We know there are logical reasoning tasks that it messes up. We’re going to go down a list of things, and we’ll put out a new version that fixes all of the current problems.”

If claims about intelligence and understanding are overblown, what about creativity? Among humans, we say that artists, mathematicians, entrepreneurs, kindergarten kids, and their teachers are all exemplars of creativity. But getting at what these people have in common is hard.

For some, it’s the results that matter most. Others argue that the way things are made—and whether there is intent in that process—is paramount.

Still, many fall back on a definition given by Margaret Boden, an influential AI researcher and philosopher at the University of Sussex, UK, who boils the concept down to three key criteria: to be creative, an idea or an artifact needs to be new, surprising, and valuable.

Beyond that, it’s often a case of knowing it when you see it. Researchers in the field known as computational creativity describe their work as using computers to produce results that would be considered creative if produced by humans alone.

Smith is therefore happy to call this new breed of generative models creative, despite their stupidity. “It is very clear that there is innovation in these images that is not controlled by any human input,” she says. “The translation from text to image is often surprising and beautiful.”

Maria Teresa Llano, who studies computational creativity at Monash University in Melbourne, Australia, agrees that text-to-image models are stretching previous definitions. But Llano does not think they are creative. When you use these programs a lot, the results can start to become repetitive, she says. This means they fall short of some or all of Boden’s requirements. And that could be a fundamental limitation of the technology. By design, a text-to-image model churns out new images in the likeness of billions of images that already exist. Perhaps machine learning will only ever produce images that imitate what it’s been exposed to in the past.

That may not matter for computer graphics. Adobe is already building text-to-image generation into Photoshop; Blender, Photoshop’s open-source cousin, has a Stable Diffusion plug-in. And OpenAI is collaborating with Microsoft on a text-to-image widget for Office.

It is in this kind of interaction, in future versions of these familiar tools, that the real impact may be felt: from machines that don’t replace human creativity but enhance it. “The creativity we see today comes from the use of the systems, rather than from the systems themselves,” says Llano—from the back-and-forth, call-and-response required to produce the result you want.

This view is echoed by other researchers in computational creativity. It’s not just about what these machines do; it’s how they do it. Turning them into true creative partners means pushing them to be more autonomous, giving them creative responsibility, getting them to curate as well as create.

Aspects of that will come soon. Someone has already written a program called CLIP Interrogator that analyzes an image and comes up with a prompt to generate more images like it. Others are using machine learning to augment simple prompts with phrases designed to give the image extra quality and fidelity—effectively automating prompt engineering, a task that has only existed for a handful of months.

Meanwhile, as the flood of images continues, we’re laying down other foundations too. “The internet is now forever contaminated with images made by AI,” says Cook. “The images that we made in 2022 will be a part of any model that is made from now on.”

We will have to wait to see exactly what lasting impact these tools will have on creative industries, and on the entire field of AI. Generative AI has become one more tool for expression. Altman says he now uses generated images in personal messages the way he used to use emoji. “Some of my friends don’t even bother to generate the image—they type the prompt,” he says.

But text-to-image models may be just the start. Generative AI could eventually be used to produce designs for everything from new buildings to new drugs—think text-to-X.

“People are going to realize that technique or craft is no longer the barrier—it’s now just their ability to imagine,” says Nelson.

Computers are already used in several industries to generate vast numbers of possible designs that are then sifted for ones that might work. Text-to-X models would allow a human designer to fine-tune that generative process from the start, using words to guide computers through an infinite number of options toward results that are not just possible but desirable.

Computers can conjure spaces filled with infinite possibility. Text-to-X will let us explore those spaces using words.

“I think that’s the legacy,” says Altman. “Images, video, audio—eventually, everything will be generated. I think it is just going to seep everywhere.”