Facebook wants machines to see the world through our eyes

We take it for granted that machines can recognize what they see in photos and videos. That ability rests on large data sets like ImageNet, a hand-curated collection of millions of photos used to train most of the best image-recognition models of the last decade.

But the images in these data sets portray a world of curated objects—a picture gallery that doesn’t capture the mess of everyday life as humans experience it. Getting machines to see things as we do will take a wholly new approach. And Facebook’s AI lab wants to take the lead.

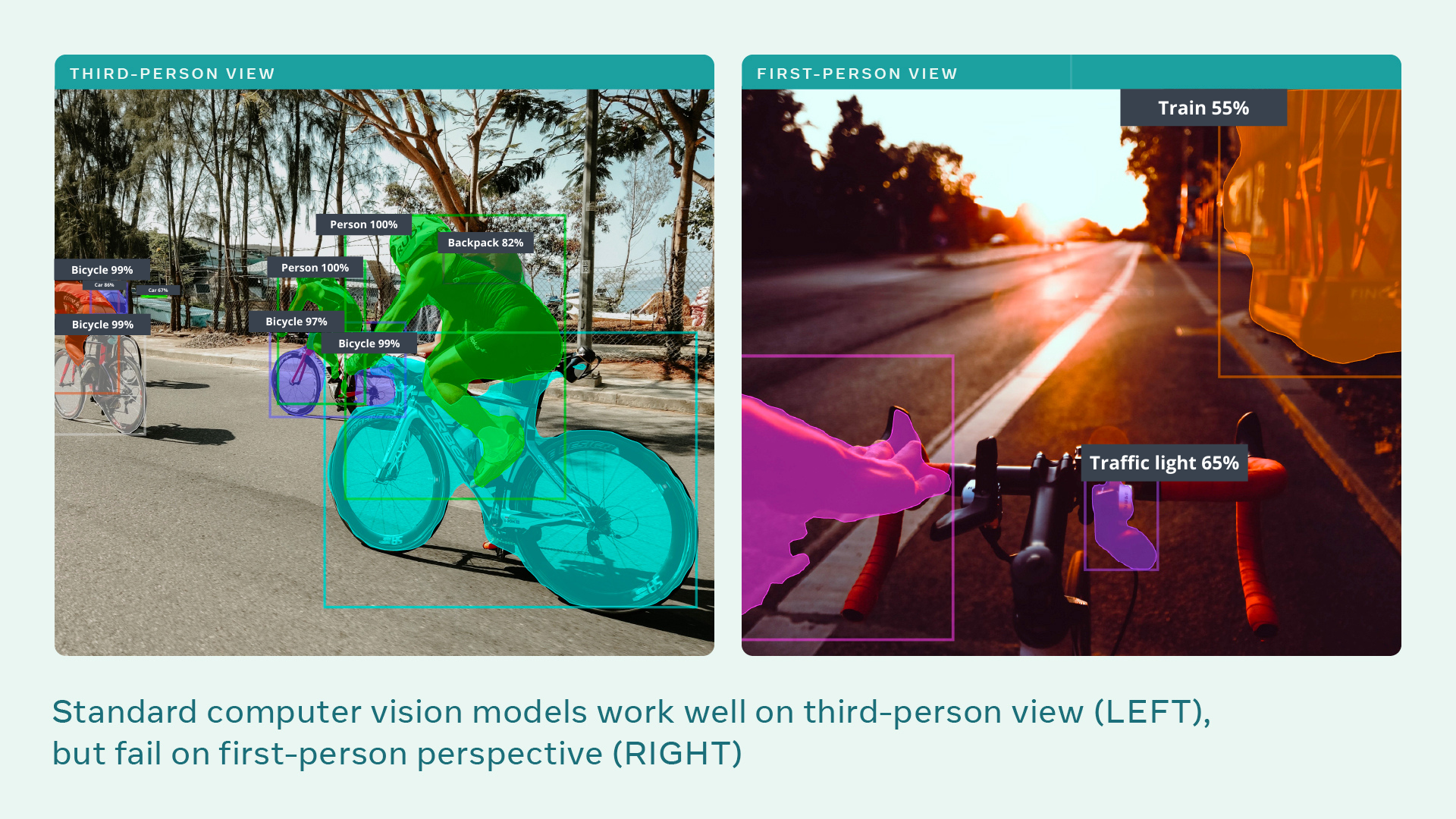

It is kick-starting a project, called Ego4D, to build AIs that can understand scenes and activities viewed from a first-person perspective—how things look to the people involved, rather than to an onlooker. Think motion-blurred GoPro footage taken in the thick of the action, instead of well-framed scenes taken by someone on the sidelines. Facebook wants Ego4D to do for first-person video what ImageNet did for photos.

For the last two years, Facebook AI Research (FAIR) has worked with 13 universities around the world to assemble the largest-ever data set of first-person video, built specifically to train deep-learning image-recognition models. AIs trained on the data set will be better at controlling robots that interact with people, or interpreting images from smart glasses. “Machines will be able to help us in our daily lives only if they really understand the world through our eyes,” says Kristen Grauman at FAIR, who leads the project.

Such tech could support people who need assistance around the home, or guide people in tasks they are learning to complete. “The video in this data set is much closer to how humans observe the world,” says Michael Ryoo, a computer vision researcher at Google Brain and Stony Brook University in New York, who is not involved in Ego4D.

But the potential misuses are clear and worrying. The research is funded by Facebook, a social media giant that has recently been accused in the US Senate of putting profits over people’s well-being—as corroborated by MIT Technology Review’s own investigations.

The business model of Facebook, and other Big Tech companies, is to wring as much data as possible from people’s online behavior and sell it to advertisers. The AI outlined in the project could extend that reach to people’s everyday offline behavior, revealing what objects are around your home, what activities you enjoy, who you spend time with, and even where your gaze lingers: an unprecedented degree of personal information.

“There’s work on privacy that needs to be done as you take this out of the world of exploratory research and into something that’s a product,” says Grauman. “That work could even be inspired by this project.”

The biggest previous data set of first-person video consists of 100 hours of footage of people in the kitchen. The Ego4D data set consists of 3,025 hours of video recorded by 855 people in 73 different locations across nine countries (US, UK, India, Japan, Italy, Singapore, Saudi Arabia, Colombia, and Rwanda).

The participants had different ages and backgrounds; some were recruited for their visually interesting occupations, such as bakers, mechanics, carpenters, and landscapers.

Previous data sets typically consisted of semi-scripted video clips only a few seconds long. For Ego4D, participants wore head-mounted cameras for up to 10 hours at a time and captured first-person video of unscripted daily activities, including walking along a street, reading, doing laundry, shopping, playing with pets, playing board games, and interacting with other people. Some of the footage also includes audio, data about where the participants’ gaze was focused, and multiple perspectives on the same scene. It’s the first data set of its kind, says Ryoo.

FAIR has also launched a set of challenges that it hopes will focus other researchers’ efforts on developing this kind of AI. The team anticipates algorithms built into smart glasses, like Facebook’s recently announced Ray-Bans, that record and log the wearers’ day-to-day lives. It means that augmented- or virtual-reality “metaverse” apps could, in theory, answer questions like “Where are my car keys?” or “What did I eat and who did I sit next to on my first flight to France?” Augmented-reality assistants could understand what you’re trying to do and offer instructions or useful social cues.

It’s sci-fi stuff, but closer than you think, says Grauman. Large data sets accelerate the research. “ImageNet drove some big advances in a short time,” she says. “We can expect the same for Ego4D, but for first-person views of the world instead of internet images.”

Once the footage was collected, crowdsourced workers in Rwanda spent a total of 250,000 hours watching the thousands of video clips and writing millions of sentences that describe the scenes and activities filmed. These annotations will be used to train AIs to understand what they are watching.

Where this tech ends up and how quickly it develops remain to be seen. FAIR is planning a competition in June 2022 based on its challenges. It is also important to note that FAIR, the research lab, is not the same as Facebook, the media megalodon. In fact, insiders say that Facebook has ignored technical fixes that FAIR has come up with for its toxic algorithms. But Facebook is paying for the research, and it is disingenuous to pretend the company is not very interested in its application.

Sam Gregory at Witness, a human rights organization that specializes in video technology, says this technology could be useful for bystanders documenting protests or police abuse. But he thinks those benefits are outweighed by concerns around commercial applications. He notes that it is possible to identify individuals from how they hold a video camera. Gaze data would be even more revealing: “It’s a very strong indicator of interest,” he says. “How will gaze data be stored? Who will it be accessible to? How might it be processed and used?”

“Facebook’s reputation and core business model ring a lot of alarm bells,” says Rory Mir at the Electronic Frontier Foundation. “At this point many are aware of Facebook’s poor track record on privacy, and their use of surveillance to influence users—both to keep users hooked and to sell that influence to their paying customers, the advertisers.” When it comes to augmented and virtual reality, Facebook is seeking a competitive advantage, he says: “Expanding the amount and types of data it collects is essential.”

When asked about its plans, Facebook was unsurprisingly tight-lipped: “Ego4D is purely research to promote advances in the broader scientific community,” says a spokesperson. “We don’t have anything to share today about product applications or commercial use.”